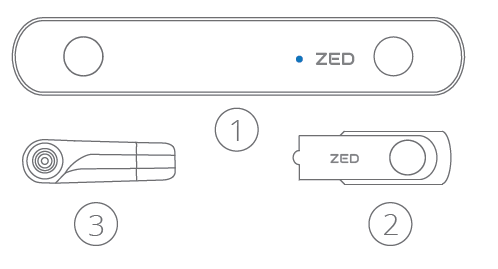

The ZED Plugin for Unreal allows developers to build AR/MR applications within Unreal Engine 5. It provides access to the stereo video passthrough, depth sensing, positional tracking, spatial mapping and object detection features of the ZED SDK.

ZED SDK 2.8 introduces streaming of a ZED's video feed across a network, turning ZED cameras into IP cameras. It also adds support for point cloud-based spatial mapping, a simpler Jetson installation process and major wrapper/plugin updates.

Each Stereolabs camera has unique optical characteristics. The cameras are factory-calibrated, and their calibration is automatically downloaded when a Stereolabs application (tool, sample, etc.) is launched for the first time. All objects at distances from ~10 cm out to infinity will be sharp. Calibration files are named after each camera's serial number and stored in the following folders: on Windows, `C:/ProgramData/Stereolabs/settings`; on Windows with SDK ≤ 2.2.0, `C:/Users/YOUR_USER_NAME/AppData/Roaming/Stereolabs/settings/`. You can retrieve the parameters of your camera through the ZED API with `getCameraInformation().camera_configuration.calibration_parameters.left_cam`. Check the API documentation for more information.

The field of view of the rectified images is reported per resolution and follows from this formula (*): `fov = 2*arctan(pixelNumber/(2*focalLength)) * (180/pi)`. The exact focal length can vary depending on the selected resolution and your camera calibration. (*) You can find a detailed explanation of this formula on Wikipedia.

Clone the ZED OpenNI2 repository using Git for Windows. Run CMake (`cmake-gui`), select the folder where you cloned the repository as the source, and set up a build folder. For `OpenNI2-DIR`, use the path to the OpenNI2 library noted above.

Disabling the modules explicitly before exiting is optional, since `zed.close()` takes care of disabling all of them. This function is also called automatically by the destructor if necessary.
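The field-of-view formula quoted above can be tried out directly. The following is a minimal sketch in plain Python, not ZED SDK code: the function name `field_of_view_deg` and the sample width and focal length are hypothetical values chosen for illustration, and real values should come from your camera's calibration parameters.

```python
import math


def field_of_view_deg(pixel_count: float, focal_length_px: float) -> float:
    """Field of view in degrees along one image axis.

    Implements fov = 2 * arctan(pixelNumber / (2 * focalLength)) * (180 / pi),
    with the focal length expressed in pixels.
    """
    if focal_length_px <= 0:
        raise ValueError("focal length must be positive")
    return 2.0 * math.atan(pixel_count / (2.0 * focal_length_px)) * (180.0 / math.pi)


if __name__ == "__main__":
    # Hypothetical numbers, NOT taken from a real calibration file.
    width_px = 1280
    fx = 700.0
    print(f"horizontal FOV: {field_of_view_deg(width_px, fx):.2f} degrees")
```

Applying the same function to the image height and the vertical focal length gives the vertical field of view. A longer focal length at the same resolution yields a narrower field of view.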
The ZED is a passive, stereovision-based camera that reproduces the way human vision works. Using its two eyes and triangulation, the ZED understands its surroundings and creates a three-dimensional model of the scene it observes. Because the ZED is a stereo camera, its images can be used to calculate a depth map through advanced image processing techniques. How does it work? The ZED camera outputs a high-resolution side-by-side color video over USB 3.0.

Spatial mapping: the ZED continuously scans its surroundings and creates a 3D map of what it sees. … frame rate of the camera, up to 100 times per second in WVGA mode.

The ZED Mini is a lightweight depth and motion sensing camera. Optimized for mixed reality and robotics, the ZED Mini features a new Ultra depth sensing mode, visual-inertial technology for improved motion tracking and a compact design for simpler integration.

Popular among robotics engineers, the ZED camera provides vision to many types of robots. Application areas include robotic arms, forklifts and mobile picking robots; transportation, agricultural, retail, security and defense; and last mile delivery, agricultural and security drones.

For object detection, set the runtime confidence parameter to 40: create `ObjectDetectionRuntimeParameters obj_runtime_parameters = new ObjectDetectionRuntimeParameters()` and set its `detectionConfidenceThreshold = 40`. Then create `Objects objects = new Objects()` and grab new frames in a loop, detecting objects while the result of `Grab(ref runtimeParameters)` matches the expected `ERROR_CODE` value. Once the program is over, the modules can be disabled and the camera closed.

The RC2014 Zed consists of the following modules: Z80 2.1 CPU Module. The RC2014 Zed Pro consists of the same modules, but is supplied with a 12-slot enhanced backplane. The design is simple, and the standard 0.1" pitch headers encourage building your own add-ons. If you want to know what is inside here are.

The first time the module is used, the model will be optimized for the hardware, which takes more time. The SDK 3.0 also introduces new neural depth sensing, improved.
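The triangulation idea mentioned above can be illustrated with the textbook relation for a rectified stereo pair, where depth equals focal length times baseline divided by disparity. This is a generic pinhole-model sketch and is not presented as the ZED SDK's internal algorithm. The function name `depth_from_disparity` and all numeric values are hypothetical.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float,
                         disparity_px: float) -> float:
    """Depth in meters for a rectified stereo pair (pinhole model).

    depth = focal_length * baseline / disparity, where disparity is the
    horizontal pixel shift of the same point between left and right images.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    fx = 700.0        # focal length in pixels
    baseline = 0.12   # distance between the two lenses, in meters
    for disparity in (84.0, 42.0, 8.4):
        depth = depth_from_disparity(fx, baseline, disparity)
        print(f"disparity {disparity:5.1f} px -> depth {depth:.2f} m")
```

Smaller disparities correspond to points farther away, which is why depth precision drops with distance for a fixed baseline.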
ZED SDK 3.0 is the first release that supports the brand new ZED 2 camera, improved in every way with wider-angle optics, more accurate factory calibration, built-in new-generation environmental and motion sensors, and an industrial-grade mounting system and enclosure.

Then we can start the module, which loads the model.

Define the object detection module parameters with `BodyTrackingParameters object_detection_parameters = new BodyTrackingParameters()`. Different models can be chosen, optimizing for either runtime or accuracy; the sample selects `HUMAN_BODY_FAST`. Setting `imageSync = true` on the parameters runs detection for every camera grab, and setting `enableObjectTracking = true` enables tracking so that objects are detected across time and space.

Object tracking requires the positional tracking module, so positional tracking has to be enabled first. Create `PositionalTrackingParameters trackingParams = new PositionalTrackingParameters()` and call `err = zedCamera.EnablePositionalTracking(ref trackingParams)`.
